Deep Neural Network Concepts

Evaluation

High Recall Regime

A high recall regime refers to a training strategy in which the model is optimized to capture as many of the relevant positive samples as possible, even if that means including some incorrect predictions (false positives).

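Recall is a metric that quantifies how well the model finds all the actual positives:

{% katex %} \text{Recall} = \frac{TP}{TP + FN} {% endkatex %}

where TP is the number of true positives and FN is the number of false negatives.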

Key points of high recall regime:

- Focus on completeness: try to find all the true positives, minimizing false negatives (missed relevant samples).

- Tolerance for errors: the model accepts a higher rate of false positives to achieve better recall.

- Use cases: common in situations where missing a positive is more costly than incorrectly including a negative (e.g., disease diagnosis, fraud detection).

F-score

The F-score, also known as the F-measure, is a common metric for evaluating model performance, especially in classification problems. It combines the model’s precision and recall into a single, balanced score.

In practice, there’s often a trade-off between precision and recall. Increasing precision might decrease recall, and vice versa. The F-score uses a harmonic mean to combine both metrics, providing a better overall assessment of the model’s capabilities.

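{% katex %} F_\beta = \frac{(1 + \beta^2) \cdot \text{Precision} \cdot \text{Recall}}{\beta^2 \cdot \text{Precision} + \text{Recall}} {% endkatex %}

Where:

- {% katex %} \beta {% endkatex %} is a non-negative parameter that adjusts the weights of precision and recall.

- When {% katex %} \beta = 1 {% endkatex %}, the formula simplifies to the F1-score formula, where precision and recall are given equal weight.

- When {% katex %} \beta > 1 {% endkatex %}, recall is given more weight, so the score rewards models that improve recall.

- When {% katex %} \beta < 1 {% endkatex %}, precision is given more weight, so the score rewards models that improve precision.

- Precision = {% katex %} \frac{TP}{TP + FP} {% endkatex %} (Out of all the positive predictions, how many were actually correct?)

- Recall = {% katex %} \frac{TP}{TP + FN} {% endkatex %} (Out of all the actual positives, how many were correctly predicted?)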

- TP = True Positives

- FP = False Positives

- FN = False Negatives

F1-score

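F1-score is a special case of the F-score where {% katex %} \beta = 1 {% endkatex %}:

{% katex %} F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} {% endkatex %}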
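As a minimal sketch of how these scores relate, the snippet below computes precision, recall, and the F-score for several values of beta. The function names and the confusion counts are hypothetical, chosen only for illustration.

```python
def precision_recall(tp, fp, fn):
    """Return (precision, recall) from raw confusion counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def f_beta(tp, fp, fn, beta=1.0):
    """F-score: weighted harmonic mean of precision and recall."""
    precision, recall = precision_recall(tp, fp, fn)
    denom = beta ** 2 * precision + recall
    if denom == 0:
        return 0.0
    return (1 + beta ** 2) * precision * recall / denom


# Hypothetical counts: recall (0.8) is higher than precision (about 0.67)
tp, fp, fn = 80, 40, 20
f1 = f_beta(tp, fp, fn, beta=1.0)
f2 = f_beta(tp, fp, fn, beta=2.0)      # weights recall more
f_half = f_beta(tp, fp, fn, beta=0.5)  # weights precision more
print(f1, f2, f_half)
```

Because recall exceeds precision in this example, the recall-weighted score (beta = 2) comes out highest and the precision-weighted score (beta = 0.5) lowest.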

Normalization

In machine learning, normalization is a technique to rescale data or features into a fixed range, usually [0,1].

Min-Max Normalization is a commonly used method for normalization.

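Rescale data into [0,1]

{% katex %} x' = \frac{x - x_{min}}{x_{max} - x_{min} } {% endkatex %}

This is the default choice, commonly used in deep learning and feature scaling.

Rescale data into [-1,1]

{% katex %} x' = 2 \cdot \frac{x - x_{min}}{x_{max} - x_{min} } - 1 {% endkatex %}

Often used in neural networks (e.g., when using the tanh activation function) because it helps keep data centered around 0, improving convergence speed.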

Z-score normalization (Standardization)

Standardization is similar to normalization, but instead of scaling to a fixed range, it transforms data to have a mean ({% katex %} \mu {% endkatex %}) of 0 and a standard deviation ({% katex %} \sigma {% endkatex %}) of 1:

{% katex %} z = \frac{x - \mu}{\sigma} {% endkatex %}

The result has the mean and standard deviation of a standard normal distribution, although the shape of the distribution itself is not changed.

Both normalization and standardization help adjust the data distribution to improve numerical stability and prevent issues like gradient explosion or vanishing in deep learning models.

Scaling to Unit Length:

Another normalization approach is scaling input vectors individually to unit norm (vector length), where the value of each feature is divided by the Euclidean length of the vector:

{% katex %} x' = \frac{x}{\lVert x \rVert_2} {% endkatex %}
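The four rescaling approaches in this section can be sketched in a few lines of NumPy. The sample arrays are made up for illustration.

```python
import numpy as np

x = np.array([2.0, 4.0, 6.0, 10.0])  # hypothetical feature values

# Min-max normalization into [0, 1]
x_01 = (x - x.min()) / (x.max() - x.min())

# Min-max normalization into [-1, 1]
x_pm1 = 2.0 * x_01 - 1.0

# Z-score standardization: zero mean, unit standard deviation
z = (x - x.mean()) / x.std()

# Scaling a single input vector to unit Euclidean length
v = np.array([3.0, 4.0])
v_unit = v / np.linalg.norm(v)

print(x_01, x_pm1, z, v_unit)
```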

Overfitting

Increasing training dataset

A good rule of thumb is that the total number of training data points should be at least 2 to 3 times larger than the number of parameters in the neural network, although the precise number of data instances depends on the specific model at hand.

A rule of thumb that has been around for almost as long as the MLP itself is that you should use a number of training examples that is at least 10 times the number of weights.

Early Stopping

Monitor how the accuracy changes on the validation dataset (or the training dataset) during training; once the accuracy has saturated, stop training.
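One common way to decide that the accuracy "has saturated" is a patience rule: stop once the validation accuracy has not improved for a fixed number of epochs. The helper below is a sketch of that rule; the function name, the `patience` and `min_delta` parameters, and the accuracy history are assumptions made for illustration.

```python
def early_stopping_epoch(val_accuracies, patience=3, min_delta=0.0):
    """Return the index of the epoch at which training would stop."""
    best = float("-inf")
    epochs_without_improvement = 0
    for epoch, acc in enumerate(val_accuracies):
        if acc > best + min_delta:
            # New best accuracy: reset the patience counter
            best = acc
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    # Never saturated: training runs to the last epoch
    return len(val_accuracies) - 1


# Hypothetical validation accuracy per epoch
history = [0.60, 0.70, 0.75, 0.76, 0.76, 0.755, 0.76, 0.77]
stop_epoch = early_stopping_epoch(history, patience=3)
print(stop_epoch)
```

With this history the best value is reached at epoch 3, so training stops three non-improving epochs later and never sees the late improvement at epoch 7; a larger `patience` trades extra training time against that risk.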

Regularization

Regularization is a technique used in machine learning to prevent overfitting by adding a penalty term to the loss function. It helps the model generalize better to unseen data by discouraging excessive complexity.

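L1 regularization (Lasso Regression)

Adds the sum of absolute values of weights to the cost/loss function:

{% katex %} E = E_0 + \lambda \sum_i |w_i| {% endkatex %}

- {% katex %} E_0 {% endkatex %} is the un-regularized cost/error function

- {% katex %} \lambda {% endkatex %} is the regularization strength

Encourages sparsity, meaning some weights become exactly zero, leading to feature selection.

L2 regularization

Adds the sum of squared values of weights to the cost/loss function:

{% katex %} E = E_0 + \lambda \sum_i w_i^2 {% endkatex %}

- {% katex %} E_0 {% endkatex %} is the un-regularized cost/error function

- {% katex %} \lambda {% endkatex %} is the regularization strength

Penalizes large weights, leading to smaller but nonzero coefficients, helping with stability.

Elastic Net Regularization

A combination of L1 and L2 regularization, balancing sparsity and stability:

{% katex %} E = E_0 + \lambda_1 \sum_i |w_i| + \lambda_2 \sum_i w_i^2 {% endkatex %}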
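The three penalty terms can be sketched in plain Python as follows. The weight values, the un-regularized loss, and the regularization strengths are hypothetical.

```python
def l1_penalty(weights, lam):
    """Sum of absolute weight values, scaled by lam."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """Sum of squared weight values, scaled by lam."""
    return lam * sum(w * w for w in weights)


def elastic_net_penalty(weights, lam1, lam2):
    """Combination of the L1 and L2 penalties."""
    return l1_penalty(weights, lam1) + l2_penalty(weights, lam2)


weights = [0.5, -1.0, 0.0, 2.0]  # hypothetical model weights
base_loss = 0.30                 # hypothetical un-regularized loss

l1_loss = base_loss + l1_penalty(weights, lam=0.01)
l2_loss = base_loss + l2_penalty(weights, lam=0.01)
en_loss = base_loss + elastic_net_penalty(weights, lam1=0.01, lam2=0.01)
print(l1_loss, l2_loss, en_loss)
```

The largest weight (2.0) contributes 4.0 to the L2 sum but only 2.0 to the L1 sum, which is why L2 pushes hardest against large weights.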

Dropout

Dropout is a radically different technique for regularization. Unlike L1 and L2 regularization, dropout doesn’t rely on modifying the cost function. Instead, in dropout we modify the network itself.

With dropout, the training process is modified. We start by randomly (and temporarily) deleting half of the hidden neurons in the network, while leaving the input and output neurons untouched.
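A minimal NumPy sketch of the idea follows. Note that it uses the "inverted dropout" variant (each neuron is dropped independently with probability 0.5 and the survivors are rescaled), which is an assumption on my part rather than the exact "delete half" procedure described above; the function name and shapes are illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)


def dropout(activations, drop_prob=0.5, training=True):
    """Zero out each activation with probability drop_prob during training."""
    if not training or drop_prob == 0.0:
        return activations
    keep_prob = 1.0 - drop_prob
    # Boolean mask: True where the neuron is kept for this forward pass
    mask = rng.random(activations.shape) < keep_prob
    # Rescale survivors so the expected activation is unchanged
    return activations * mask / keep_prob


hidden = np.ones((4, 1000))  # hypothetical hidden-layer activations
dropped = dropout(hidden, drop_prob=0.5)
print(dropped.mean())
```

At inference time (`training=False`) the activations pass through unchanged, so no extra rescaling is needed there.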

Local Minima

Momentum

Momentum is an optimization technique that helps accelerate gradient descent and escape local minima by adding an exponentially weighted moving average of past gradients:

{% katex %} w_t = w_{t-1} - \eta \nabla E(w_{t-1}) + \alpha \Delta w_{t-1} {% endkatex %}

- {% katex %} w_t {% endkatex %}: weight at the current time step t

- {% katex %} w_{t-1} {% endkatex %}: weight at the previous time step

- {% katex %} \eta {% endkatex %}: learning rate

- {% katex %} \nabla E(w_{t-1}) {% endkatex %}: gradient of the error at the previous weight

- {% katex %} \alpha {% endkatex %}: momentum constant, {% katex %} 0 < \alpha < 1 {% endkatex %}, usually 0.9

- {% katex %} \Delta w_{t-1} {% endkatex %}: the update value in the previous time step, {% katex %} \Delta w_{t-1} = w_{t-1} - w_{t-2} {% endkatex %}
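The update rule can be sketched on a one-dimensional toy problem. The error function E(w) = (w - 3)^2 and the hyperparameter values are assumptions chosen only to make the example concrete.

```python
def grad_E(w):
    """Gradient of the toy error function E(w) = (w - 3)^2."""
    return 2.0 * (w - 3.0)


def momentum_descent(w0, eta=0.1, alpha=0.9, steps=200):
    """Gradient descent with momentum: each update reuses the previous one."""
    w = w0
    delta_prev = 0.0  # previous update, w_{t-1} - w_{t-2}
    for _ in range(steps):
        delta = -eta * grad_E(w) + alpha * delta_prev
        w = w + delta
        delta_prev = delta
    return w


w_final = momentum_descent(w0=0.0)
print(w_final)  # approaches the minimum at w = 3
```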

Sequential Training

Batch gradient descent

In batch training, all of the training examples are presented to the neural network, the average sum-of-squares error is then computed, and this is used to update the weights. Thus there is only one set of weight updates for each epoch (one pass through all the training examples).

In sequential training, by contrast, the errors are computed and the weights updated after each input. This is not guaranteed to be as efficient in learning, but it is simpler to program when using loops, and it is therefore much more common.

Stochastic Gradient Descent (SGD)

- An optimization algorithm that updates model parameters using one random sample at a time.

- Faster per update, but has higher variance in updates.

- Helps escape local minima due to its randomness.

Mini-Batch Gradient Descent

Mini-batch is a training method that balances Stochastic Gradient Descent (SGD) and Batch Gradient Descent:

- Instead of using one sample (SGD) or the entire dataset (Batch GD), mini-batch splits data into small groups (mini-batches).

- Model updates weights after processing each mini-batch.
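The three variants differ only in how many samples feed each weight update, so one loop with a `batch_size` parameter can sketch all of them. The toy linear-regression data, learning rate, and epoch counts below are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical noiseless data generated from y = 3x + 1
X = rng.normal(size=(200, 1))
y = 3.0 * X[:, 0] + 1.0


def train(X, y, batch_size, epochs=200, lr=0.05):
    """Fit y = w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(X)
    for _ in range(epochs):
        order = rng.permutation(n)  # reshuffle the samples every epoch
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            err = w * X[idx, 0] + b - y[idx]
            # One weight update per (mini-)batch
            w -= lr * 2.0 * np.mean(err * X[idx, 0])
            b -= lr * 2.0 * np.mean(err)
    return w, b


w_batch, b_batch = train(X, y, batch_size=len(X))   # batch GD: 1 update per epoch
w_mini, b_mini = train(X, y, batch_size=32)         # mini-batch: 7 updates per epoch
w_sgd, b_sgd = train(X, y, batch_size=1, epochs=20) # SGD: 200 updates per epoch
print(w_batch, w_mini, w_sgd)
```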

Local and Global Relationships

| Feature | Local Relationships | Global Relationships |
|---|---|---|
| Scope | Close, neighboring elements | Distant, non-adjacent elements |
| CNN Capture | Convolutional kernels | Larger kernels, pooling, or other techniques |
| Importance | Basic feature extraction | High-level understanding, context |

Attention Block

The attention mechanism is a fundamental concept in modern neural networks, particularly in natural language processing(NLP), computer vision, and other sequence-based tasks. It allows a model to focus on the most relevant parts of the input data when making predictions, mimicking the way humans pay attention to specific details while ignoring less important information.

What is an Attention Block?

An attention block is a module within a neural network that computes a weighted sum of input features, where the weights are dynamically determined based on the relevance of each feature to the task at hand. These weights are often referred to as attention scores. The attention block enables the model to:

- Focus on the most important parts of the input.

- Handle long-range dependencies in sequential data (e.g., in text or time series).

- Improve the interpretability of the model by revealing which parts of the input are being prioritized.

SE - Squeeze and Excitation Block

Focus: Channel attention. It learns to weight the importance of different channels in a feature map.

Mechanism:

- Squeeze: Global average pooling reduces spatial dimensions, creating a channel-wise descriptor.

- Excitation: Two fully connected layers learn channel-wise scaling factors.

- Scaling: The original feature map is multiplied by the learned scaling factors.
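The three steps can be sketched as a NumPy forward pass for a single feature map. The weights here are random rather than learned, and the shapes, the reduction ratio `r`, and the ReLU/sigmoid activations are assumptions made for this illustration.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def se_block(feature_map, w1, b1, w2, b2):
    """Forward pass of a squeeze-and-excitation block on an (H, W, C) map."""
    # Squeeze: global average pooling gives one descriptor per channel
    squeezed = feature_map.mean(axis=(0, 1))       # shape (C,)
    # Excitation: two fully connected layers produce channel-wise factors
    hidden = np.maximum(0.0, squeezed @ w1 + b1)   # shape (C // r,)
    scale = sigmoid(hidden @ w2 + b2)              # shape (C,), values in (0, 1)
    # Scaling: reweight every channel of the original feature map
    return feature_map * scale, scale


rng = np.random.default_rng(0)
H, W, C, r = 8, 8, 16, 4  # hypothetical sizes and reduction ratio
x = rng.normal(size=(H, W, C))
w1, b1 = rng.normal(size=(C, C // r)) * 0.1, np.zeros(C // r)
w2, b2 = rng.normal(size=(C // r, C)) * 0.1, np.zeros(C)

y, scale = se_block(x, w1, b1, w2, b2)
print(y.shape, scale.shape)
```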

ECA - Efficient Channel Attention Block

Focus: Channel attention, with improved efficiency.

Mechanism:

- Global Average Pooling: Similar to SE block.

- 1D Convolution: Instead of fully connected layers, it uses a 1D convolution with adaptive kernel size to capture local cross-channel interactions.

- Scaling: Same as SE block.

Key Improvement: More efficient than SE block due to the 1D convolution.

CBAM - Convolutional Block Attention Module

Focus: Both channel and spatial attention.

Mechanism:

- Channel Attention: Similar to SE block, but uses both global average pooling and global max pooling.

- Spatial Attention: Learns to weight the importance of different spatial locations in the feature map using convolutional layers.

- Combination: The channel and spatial attention maps are multiplied with the input feature map in sequence, channel attention first and spatial attention second.

Loss Functions

Cross-Entropy

The cross-entropy loss function, also known as log loss or binary cross-entropy (in the binary case), is a widely used loss function in machine learning, especially for classification tasks. It quantifies the difference between two probability distributions: the predicted probability distribution generated by a model and the true (or target) probability distribution.

Purpose:

- Measures the difference between predicted and true probabilities: Cross-entropy loss penalizes models that produce predictions that are far from the actual labels.

- Drives learning in classification: By minimizing the cross-entropy loss during training, the model learns to output probability distributions that are closer to the true distributions of the classes.

- Well-behaved gradient: The cross-entropy loss function has a nice gradient that makes it suitable for optimization algorithms like gradient descent. This means the model can effectively learn from its errors.

Mathematical Formulation:

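- Binary Cross-Entropy (for two classes):

Let:

- N be the number of samples.

- {% katex %} y_i {% endkatex %} be the true label for the i-th sample (either 0 or 1).

- {% katex %} p_i {% endkatex %} be the predicted probability of the i-th sample belonging to class 1 (so {% katex %} 1 - p_i {% endkatex %} is the predicted probability of belonging to class 0).

The binary cross-entropy loss L is calculated as:

{% katex %} L = -\frac{1}{N} \sum_{i=1}^{N} \left[ y_i \log(p_i) + (1 - y_i) \log(1 - p_i) \right] {% endkatex %}

Explanation:

- If the true label {% katex %} y_i {% endkatex %} is 1, the second term becomes zero, and the loss depends on {% katex %} \log(p_i) {% endkatex %}. If the prediction {% katex %} p_i {% endkatex %} is close to 1 (correct), the loss is close to 0. If {% katex %} p_i {% endkatex %} is close to 0 (incorrect), the loss becomes very large.

- If the true label {% katex %} y_i {% endkatex %} is 0, the first term becomes zero, and the loss depends on {% katex %} \log(1 - p_i) {% endkatex %}. If the prediction {% katex %} p_i {% endkatex %} is close to 0 (correct, meaning {% katex %} 1 - p_i {% endkatex %} is close to 1), the loss is close to 0. If {% katex %} p_i {% endkatex %} is close to 1 (incorrect, meaning {% katex %} 1 - p_i {% endkatex %} is close to 0), the loss becomes very large.

- The negative sign ensures that the loss is a positive value, since the logarithm of a probability is never positive.

- The factor {% katex %} \frac{1}{N} {% endkatex %} averages the loss over all samples.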

- Categorical Cross-Entropy (for multiple classes):

Let:

- N be the number of samples.

- C be the number of classes.

- {% katex %} y_{i,c} {% endkatex %} be a binary indicator (0 or 1) that is 1 if the i-th sample belongs to class c, and 0 otherwise (one-hot encoded true label).

- {% katex %} p_{i,c} {% endkatex %} be the predicted probability of the i-th sample belonging to class c.

The categorical cross-entropy loss L is calculated as:

{% katex %} L = -\frac{1}{N} \sum_{i=1}^{N} \sum_{c=1}^{C} y_{i,c} \log(p_{i,c}) {% endkatex %}

Explanation:

- For each sample i, we iterate through all classes c.

- The term {% katex %} y_{i,c} \log(p_{i,c}) {% endkatex %} is only non-zero when the true label {% katex %} y_{i,c} {% endkatex %} is 1 (i.e., the sample belongs to class c). In this case, the loss depends on {% katex %} \log(p_{i,c}) {% endkatex %}.

- Similar to the binary case, if the predicted probability for the correct class is high, the loss is close to 0. If it’s low, the loss is high.
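Both formulations can be sketched in plain Python. The clipping constant `eps` (to avoid taking the log of zero), the function names, and the sample predictions are assumptions added for illustration.

```python
import math


def binary_cross_entropy(y_true, p_pred, eps=1e-12):
    """Mean binary cross-entropy over N samples."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, eps), 1.0 - eps)  # keep log() finite
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return -total / len(y_true)


def categorical_cross_entropy(y_true, p_pred, eps=1e-12):
    """Mean categorical cross-entropy; y_true rows are one-hot."""
    total = 0.0
    for y_row, p_row in zip(y_true, p_pred):
        for y, p in zip(y_row, p_row):
            total += y * math.log(max(p, eps))
    return -total / len(y_true)


# Confident and correct versus confident and wrong (hypothetical predictions)
bce_good = binary_cross_entropy([1, 0], [0.9, 0.1])
bce_bad = binary_cross_entropy([1, 0], [0.1, 0.9])
cce = categorical_cross_entropy([[0, 1, 0]], [[0.2, 0.7, 0.1]])
print(bce_good, bce_bad, cce)
```

The correct predictions give a loss of about 0.105, while the same confidence on the wrong class gives about 2.30, which illustrates how strongly confident mistakes are penalized.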

Focal loss

The focal loss function is a cross-entropy loss with a weighting factor alpha that balances the uneven proportion of positive and negative samples, together with a modulating factor that addresses the imbalance between easy and hard samples. U-CARFnet adopted the focal loss function to optimize the training performance under severe class imbalance.

The binary cross-entropy loss function, binary_crossentropy:

{% katex %} L = -y \log(p) - (1 - y) \log(1 - p) {% endkatex %}

- p is the predicted probability (the model output)

- y is the actual label

We can observe from above equation that, when using the standard cross-entropy loss function, the loss decreases as the model’s predicted probability increases for positive samples, and decreases as the predicted probability decreases for negative samples.

However, during training, the loss function tends to update slowly for many easy samples (samples that the model already classifies correctly). This can make the optimization process less efficient, potentially preventing the model from reaching its best performance.

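The focal loss function introduced in U-CARFnet can solve such problems by modifying the cross-entropy in two ways:

{% katex %} L_{\alpha} = -\alpha \, y \log(p) - (1 - \alpha)(1 - y) \log(1 - p) {% endkatex %}

{% katex %} L_{\gamma} = -(1 - p)^{\gamma} \, y \log(p) - p^{\gamma} (1 - y) \log(1 - p) {% endkatex %}

where {% katex %} \alpha {% endkatex %} can solve the class imbalance, while {% katex %} \gamma {% endkatex %} can solve the imbalance between easy and hard samples.

The two separate improvements above are combined to form the focal loss function in the form of

{% katex %} L_{fl} = -\alpha (1 - p)^{\gamma} \, y \log(p) - (1 - \alpha) \, p^{\gamma} (1 - y) \log(1 - p) {% endkatex %}

This solves the imbalance between positive and negative samples and between the easy and hard samples.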
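The effect of the two factors can be sketched for a single sample using the commonly published binary focal loss form. The defaults `alpha=0.25` and `gamma=2.0` and the example probabilities are assumptions, not values taken from U-CARFnet.

```python
import math


def focal_loss(y, p, alpha=0.25, gamma=2.0, eps=1e-12):
    """Binary focal loss for one sample with label y and predicted probability p."""
    p = min(max(p, eps), 1.0 - eps)  # keep log() finite
    pos = -alpha * (1.0 - p) ** gamma * y * math.log(p)
    neg = -(1.0 - alpha) * p ** gamma * (1 - y) * math.log(1.0 - p)
    return pos + neg


# A positive sample the model already classifies well versus a hard one
easy = focal_loss(1, 0.95)
hard = focal_loss(1, 0.30)

# With gamma = 0 the modulating factor disappears and only alpha-weighting remains
plain = focal_loss(1, 0.30, alpha=0.5, gamma=0.0)
print(easy, hard, plain)
```

Under plain cross-entropy the hard sample's loss is roughly 23 times that of the easy one; with gamma = 2 the ratio grows to several thousand, so easy samples contribute far less to the total loss.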

Dice loss

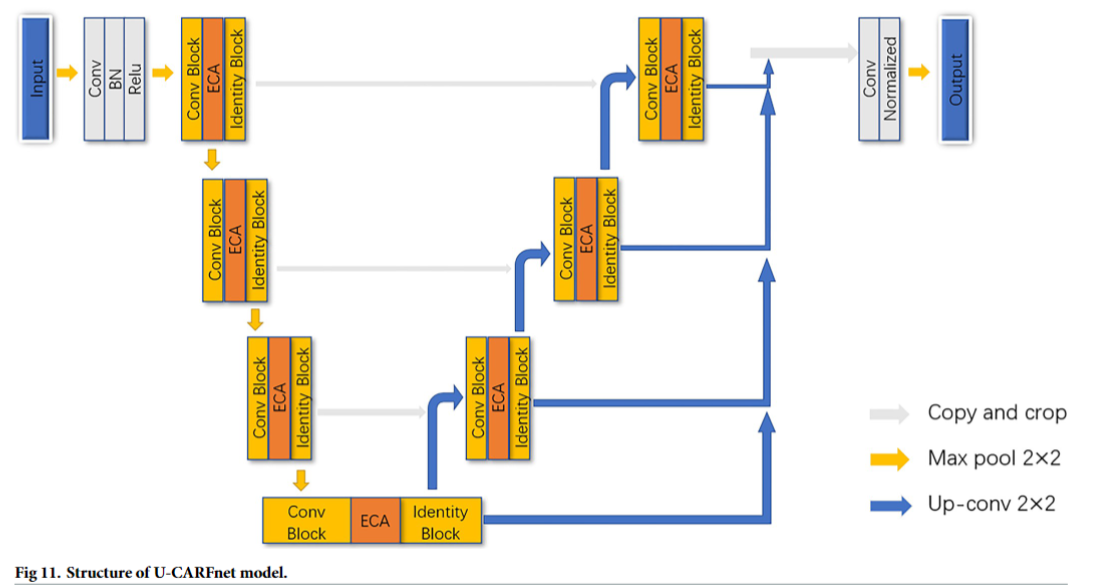

U-CARFnet

Development

U-CARFnet introduced three developments.

1. Attention Mechanism

SENet, CBAM and ECANet.

2. Residual learning module

U-CARFnet applies the residual learning modules proposed in the ResNet model to address the information loss that occurs during transmission through traditional convolutional or fully connected networks. This structure can accelerate the training of neural networks and greatly enhance the model’s accuracy.

3. Focal Loss Function

model

Above figure shows the residual learning module and the CEIB learning module modified from the deformable convolutional block integrated with the attention mechanism in the U-CARFnet model. In general, the deformable convolutional block can locally perceive each feature in the image using the convolution layer. The local features are integrated at a high level to extract the global feature information. The learning ability of skip connection in the residual learning module is then utilized to transmit the low-level and high-level information to the next stage. The features are extracted using the next group of the convolution operation. Finally, the attention learning module is introduced to optimize the information learning and extraction ability and solve the problem of information error and loss during learning and transmission.

HED

Deep Supervision and Weighted Fusion Supervision

- Deep Supervision

Deep supervision refers to the technique of introducing additional supervision signals at multiple layers of a neural network (typically intermediate layers), rather than applying supervision only at the final output layer. Its purposes are mitigating gradient vanishing, enhancing feature learning, and capturing multi-scale features.

HED introduces side-output layers at multiple convolutional layers of VGGNet (e.g., conv1_2, conv2_2, conv3_3, conv4_3, conv5_3).

Each side-output layer is associated with a supervision signal (e.g., cross-entropy loss) to train the predictions at that layer.

- Weighted Fusion Supervision

Weighted fusion supervision refers to the process of combining predictions from multiple layers (e.g., side-outputs from deep supervision) through a weighted fusion mechanism to produce the final output. Its purposes are integrating multi-scale information and optimizing the final output.

HED fuses the predictions from multiple side-output layers using learned or manually set weights.

The fused result is used as the final edge detection map.

Consensus Sampling

Consensus sampling is a technique that combines predictions or decisions from multiple models or data sources to produce a more reliable and robust output by selecting results that achieve majority agreement.

The core idea is leveraging the principle that agreement among independent models or data sources reduces the impact of individual errors or noise.

Key Methods:

- Majority Voting: For classification tasks, the most frequently predicted class is selected.

- Weighted Averaging: For regression tasks, predictions are combined using weighted averages, where weights reflect model confidence or performance.

- Threshold-Based Selection: Results are accepted only if they meet a predefined agreement threshold (e.g., 80% of models agree).
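The three methods can be sketched with the standard library alone. The function names, the example predictions, and the weights are hypothetical.

```python
from collections import Counter


def consensus_vote(predictions, threshold=0.5):
    """Majority vote that is accepted only if agreement reaches the threshold."""
    counts = Counter(predictions)
    label, votes = counts.most_common(1)[0]
    agreement = votes / len(predictions)
    # Return None when the models do not agree strongly enough
    return (label if agreement >= threshold else None), agreement


def weighted_average(predictions, weights):
    """Combine regression outputs, weighting each model by its confidence."""
    return sum(p * w for p, w in zip(predictions, weights)) / sum(weights)


# Five hypothetical classifiers, 80% agreement required
label, agreement = consensus_vote(["cat", "cat", "dog", "cat", "cat"], threshold=0.8)
rejected, _ = consensus_vote(["cat", "dog", "dog", "cat", "bird"], threshold=0.8)

# Three hypothetical regressors with confidence weights
estimate = weighted_average([10.0, 12.0, 20.0], [0.5, 0.4, 0.1])
print(label, agreement, rejected, estimate)
```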

TensorFlow

Layers

Convolutional

Padding

valid

No padding is added to the input. The output feature map is smaller than the input. The output size decreases based on the kernel size and stride.

same

The output size stays the same as the input (for a stride of 1), with padding applied to the borders of the input.
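The effect of the two padding modes on the output size can be sketched with a small helper that follows the size rules documented for TensorFlow convolutions (without dilation). The function name and the example sizes are assumptions.

```python
import math


def conv_output_size(input_size, kernel_size, stride=1, padding="valid"):
    """Spatial output size of a convolution along one dimension."""
    if padding == "valid":
        # No padding: the kernel must fit entirely inside the input
        return math.ceil((input_size - kernel_size + 1) / stride)
    if padding == "same":
        # Input is padded so the size depends only on the stride
        return math.ceil(input_size / stride)
    raise ValueError("padding must be 'valid' or 'same'")


valid_s1 = conv_output_size(224, 3, stride=1, padding="valid")
same_s1 = conv_output_size(224, 3, stride=1, padding="same")
valid_s2 = conv_output_size(224, 3, stride=2, padding="valid")
same_s2 = conv_output_size(224, 3, stride=2, padding="same")
print(valid_s1, same_s1, valid_s2, same_s2)
```

With a stride of 2, even "same" padding halves the spatial size, which is why the output matches the input size only when the stride is 1.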

BatchNormalization

BatchNormalization is used to improve the stability, speed, and performance of neural networks during training. It works by normalizing the input of each layer to have a mean of zero and a standard deviation of one, and then adjusting and scaling the activations during training.

- Normalization: For each mini-batch during training, BatchNormalization normalizes the output of the previous layer by subtracting the batch mean and dividing by the batch standard deviation. This helps in reducing internal covariate shift, which is the change in the distribution of network activations due to the change in network parameters during training.

- Scaling and shifting: After normalization, the data is scaled and shifted using two learnable parameters, gamma and beta. These parameters allow the network to undo the normalization if it learns that the original distribution was better for the task.

The Factory Analogy for Batch Normalization

Imagine a neural network as a factory with multiple workshops(layers) in a production line. Each workshop takes the output from the previous workshop, performs some processing, and passes it on to the next one.

Internal Covariate Shift: This is like the raw materials (data) coming into each workshop varying wildly in quality and consistency. One day it’s all large, high-quality pieces, the next day it’s small, low-quality scraps. Each workshop has to constantly adjust its machines and processes to handle this changing input, making production slow and inefficient.

Batch Normalization: This is like installing a quality control and standardization unit at the entrance of each workshop. This unit takes the incoming raw materials(data from the previous layer), measures their average size and quality, and then processes them to ensure they are all of a consistent, standard size and quality.

By standardizing the input to each workshop(layer), Batch Normalization ensures that each workshop receives a consistent flow of data. This means the workshops don’t have to constantly readjust their processes, making them more efficient.

Batch Normalization acts like a buffer that smooths out the variations in data flow between layers, allowing each layer to focus on learning the core patterns without being disrupted by changes in the overall data distribution.

The key components of batch normalization are:

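- Mini-batch statistics:

For a given feature in a mini-batch, we calculate:

Mean ({% katex %} \mu_B {% endkatex %}) of that feature across all samples in the mini-batch:

{% katex %} \mu_B = \frac{1}{m} \sum_{i=1}^{m} x_i {% endkatex %}

where m is the number of samples in the mini-batch, and {% katex %} x_i {% endkatex %} is the value of the feature for sample i;

Variance ({% katex %} \sigma_B^2 {% endkatex %}) of that feature:

{% katex %} \sigma_B^2 = \frac{1}{m} \sum_{i=1}^{m} (x_i - \mu_B)^2 {% endkatex %}

This tells us how spread out the values are for the feature.

- Normalization:

The calculated mean and variance are used to normalize each feature:

{% katex %} \hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon} } {% endkatex %}

- {% katex %} x_i {% endkatex %} is the original value for sample i,

- {% katex %} \mu_B {% endkatex %} is the mean of the feature in the mini-batch

- {% katex %} \sigma_B^2 {% endkatex %} is the variance of the feature in the mini-batch

- {% katex %} \epsilon {% endkatex %} is a small constant added to avoid division by zero.

This process makes sure that the feature values are centered around 0 with a standard deviation of 1.

- Scale and Shift:

After normalizing, we scale and shift the values using learnable parameters {% katex %} \gamma {% endkatex %} and {% katex %} \beta {% endkatex %} to allow the model to adjust:

{% katex %} y_i = \gamma \hat{x}_i + \beta {% endkatex %}

- {% katex %} \hat{x}_i {% endkatex %} is the normalized value

- {% katex %} \gamma {% endkatex %} is the scaling factor (learned by the model)

- {% katex %} \beta {% endkatex %} is the shifting factor (also learned by the model)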
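The training-time computation can be sketched in NumPy as follows. The value `eps=1e-5`, the batch shape, and the function name are assumptions, and the sketch leaves out the moving averages that a real layer keeps for inference.

```python
import numpy as np


def batch_norm(x, gamma, beta, eps=1e-5):
    """Batch normalization for a (batch, features) array, training mode only."""
    mu = x.mean(axis=0)                    # per-feature mini-batch mean
    var = x.var(axis=0)                    # per-feature mini-batch variance
    x_hat = (x - mu) / np.sqrt(var + eps)  # normalize
    return gamma * x_hat + beta            # scale and shift


rng = np.random.default_rng(0)
x = rng.normal(loc=5.0, scale=3.0, size=(32, 4))  # hypothetical mini-batch

out = batch_norm(x, gamma=np.ones(4), beta=np.zeros(4))
shifted = batch_norm(x, gamma=np.full(4, 2.0), beta=np.ones(4))
print(out.mean(axis=0), out.std(axis=0))
```

With gamma = 1 and beta = 0 every feature ends up with mean 0 and standard deviation close to 1; other values of gamma and beta move the output to a standard deviation of gamma and a mean of beta.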

GlobalAveragePooling2D

The GlobalAveragePooling2D layer takes a 4D tensor as input (typically the output of a convolutional layer) and performs global average pooling across the spatial dimensions (height and width).

How it works:

- It calculates the average value for each channel across the entire spatial dimensions (height and width) of the input tensor.

- It outputs a 2D tensor of shape (batch_size, channels), where each element represents the average value for a specific channel.

Key role:

- Reduces dimensionality: It significantly reduces the number of parameters in the layers that follow by replacing spatial dimensions with channel-wise averages.

- Feature aggregation: It aggregates spatial information into a compact representation for each channel.

- Regularization: It can help prevent overfitting by reducing the number of parameters.
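The operation itself amounts to a mean over the height and width axes. The NumPy sketch below assumes a channels-last layout (batch, height, width, channels) and a made-up input tensor.

```python
import numpy as np

# Hypothetical batch of 2 feature maps, each 4x4 with 3 channels
feature_maps = np.arange(2 * 4 * 4 * 3, dtype=float).reshape(2, 4, 4, 3)

# Average over the height and width axes, keeping batch and channel axes
pooled = feature_maps.mean(axis=(1, 2))

print(feature_maps.shape, "->", pooled.shape)
```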

Dense

Dense Layers are the most basic and widely used type of layer in neural networks. In a dense layer, every neuron is connected to every neuron in the previous layer.

How they work:

- Linear Transformation: The input is multiplied by a weight matrix and then a bias is added.

- Activation Function: A non-linear function (like ReLU, sigmoid, etc.) is applied element-wise to the result. This introduces non-linearity, which is essential for learning complex patterns.

Key role:

- Feature Extraction: Dense layers learn to combine features from the previous layer in complex ways.

- Classification/Regression: Often used as the final layer(s) to make predictions

Upsampling

In-Network Bilinear Interpolation

In-Network bilinear interpolation is a fixed upsampling method used to increase the resolution of feature maps by interpolating values based on weighted averages of neighboring pixels.

The interpolation weights are predefined and do not change during training. It’s computationally lightweight, making it suitable for real-time applications.

This upsampling method was used in semantic segmentation, e.g., FCN, U-Net.

```python
import tensorflow as tf
```

In-Network Deconvolutional Layer

In-Network deconvolutional layer is a learnable upsampling method, also known as a transposed convolutional layer, that increases the resolution of feature maps by reversing the spatial downsampling of a convolution operation (it is not a true mathematical inverse).

The weights are optimized during training, allowing the layer to adapt to specific tasks. It’s capable of learning complex upsampling patterns, making it suitable for high-precision tasks. It’s more computationally expensive due to learnable weights.

In-Network deconvolutional layer was used in image generation in Generative Adversarial Networks, and in semantic segmentation, e.g., SegNet, DeepLab. It is also used in the decoder part of autoencoders.

```python
import tensorflow as tf
```

kernel_initializer

When constructing a convolutional layer, we can specify the kernel_initializer.

1. glorot_uniform (Xavier Uniform), the default

It is designed to maintain a balance between variance of activations and gradients across layers, helping to prevent vanishing or exploding gradients.

{% katex %} W \sim U\left(-\sqrt{\frac{6}{n_{in} + n_{out} } },\ \sqrt{\frac{6}{n_{in} + n_{out} } }\right) {% endkatex %}

- {% katex %} n_{in} {% endkatex %} = Number of input units to the layer

- {% katex %} n_{out} {% endkatex %} = Number of output units

- U(a,b): uniform distribution in range of [a, b]

This helps keep the variance of activations consistent throughout the network.

How to get a uniformly distributed array or matrix with Python

```python
import numpy as np
```

When to use:

- Works well with sigmoid, tanh, and softmax activations.

- Suitable for both shallow and deep networks, but for ReLU-based networks, HeNormal is often preferred.
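As a minimal sketch, a Glorot-uniform weight matrix can be sampled with NumPy as shown below. The helper name and the layer sizes are hypothetical, and this is an illustration of the formula rather than the library's own implementation.

```python
import numpy as np


def glorot_uniform(fan_in, fan_out, rng):
    """Sample a (fan_in, fan_out) weight matrix from U(-limit, limit)."""
    limit = np.sqrt(6.0 / (fan_in + fan_out))
    return rng.uniform(-limit, limit, size=(fan_in, fan_out))


rng = np.random.default_rng(0)
W = glorot_uniform(256, 128, rng)  # hypothetical layer: 256 inputs, 128 outputs

limit = np.sqrt(6.0 / (256 + 128))
print(W.shape, limit, W.var())
```

A uniform distribution on [-limit, limit] has variance limit^2 / 3, which works out to 2 / (fan_in + fan_out) here; the sample variance printed above should land close to that value.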

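2. he_normal

It is designed specifically for deep neural networks using ReLU and its variants (e.g., LeakyReLU, ELU). It helps prevent vanishing or exploding gradients by ensuring that the variance of activations remains stable throughout the layers.

ReLU: Rectified Linear Unit

{% katex %} W \sim \mathcal{N}\left(0, \sigma^2\right), \quad \sigma = \sqrt{\frac{2}{n_{in} } } {% endkatex %}

- {% katex %} n_{in} {% endkatex %} is the number of input units to the layer

- {% katex %} \mathcal{N}(\mu, \sigma^2) {% endkatex %}: is the normal distribution, {% katex %} \mu {% endkatex %} is the mean, {% katex %} \sigma {% endkatex %} is the standard deviation.

- The variance {% katex %} \frac{2}{n_{in} } {% endkatex %} is derived from keeping the variance of activations stable in ReLU networks.

How to generate a random matrix with a normal distribution in Python

```python
import numpy as np
```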
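As a minimal sketch, a He-normal weight matrix can be sampled with NumPy as shown below. The helper name and layer sizes are hypothetical, and a plain (untruncated) normal distribution is assumed here for simplicity, which may differ from what a given library does internally.

```python
import numpy as np


def he_normal(fan_in, fan_out, rng):
    """Sample a (fan_in, fan_out) weight matrix from N(0, 2 / fan_in)."""
    stddev = np.sqrt(2.0 / fan_in)
    return rng.normal(loc=0.0, scale=stddev, size=(fan_in, fan_out))


rng = np.random.default_rng(0)
W = he_normal(512, 256, rng)  # hypothetical layer: 512 inputs, 256 outputs

print(W.shape, W.mean(), W.std())  # std should be near sqrt(2 / 512) = 0.0625
```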

Load Dataset

Load dataset from folder

```python
import tensorflow as tf

train_dataset = tf.keras.utils.image_dataset_from_directory(
    "path_to_data",
    image_size=(224, 224),
    batch_size=32,
    label_mode="categorical",  # one of "int", "categorical", "binary"
)
```

Load dataset from NumPy array

```python
import numpy as np
import tensorflow as tf

# suppose there are 1000 224*224 RGB images
x_train = np.random.rand(1000, 224, 224, 3)
y_train = np.random.randint(0, 10, (1000,))  # 10 classes

train_dataset = tf.data.Dataset.from_tensor_slices((x_train, y_train)).batch(32)
```

Load dataset from TFRecord file

If the data is in TFRecord format, tf.data.TFRecordDataset is suggested for parsing the dataset:

```python
# parse_function is a user-defined function that decodes one serialized example
raw_dataset = tf.data.TFRecordDataset("path_to_data.tfrecord")
train_dataset = raw_dataset.map(parse_function).batch(32)
```

Data structure of train_dataset

```python
for images, labels in train_dataset.take(1):
    print(images.shape, labels.shape)
```

Output

(32, 224, 224, 3) (32, num_classes) # 32 RGB images of size 224*224

Data Augmentation

In TensorFlow, using train_dataset.map(data_augmentation) applies data augmentation dynamically during training. This means that every time an image is fed to the model, it is modified in real time by the data_augmentation function (e.g., random flips, rotation, brightness adjustments). Because the random transformations are re-applied on every pass, the images vary from epoch to epoch, but the size of the dataset remains unchanged.

Difference between dynamic and static augmentation:

- Dynamic Augmentation (via dataset.map(data_augmentation)):

  - No increase in dataset size: The dataset’s size remains the same. Augmented images are generated on the fly during training.

  - Memory Efficient: It doesn’t require additional storage since the augmented images are generated during training.

  - Flexibility: Every epoch sees different augmented versions of the images, improving model robustness.

- Static Augmentation (pre-generating more images):

  - Increase in dataset size: Augmented images are generated and stored beforehand, increasing the total dataset size.

  - Higher Memory Usage: Requires more disk space and memory to store all augmented images.

Type


Processing

Apply image augmentation directly on train_dataset

```python
import tensorflow as tf

def augment(image, label):
    # Randomly mirror the image horizontally
    image = tf.image.random_flip_left_right(image)
    # Randomly shift the brightness by up to +/-0.2
    image = tf.image.random_brightness(image, max_delta=0.2)
    return image, label

train_dataset = train_dataset.map(augment)
```

ImageDataGenerator (deprecated)

It does not support GPU acceleration and does not work with tf.data.

```python
from tensorflow.keras.preprocessing.image import ImageDataGenerator

datagen = ImageDataGenerator(
    rescale=1./255,
    rotation_range=40,
    width_shift_range=0.2,
    height_shift_range=0.2,
    shear_range=0.2,
    zoom_range=0.2,
    horizontal_flip=True
)

train_generator = datagen.flow_from_directory(
    "path_to_data",
    target_size=(224, 224),
    batch_size=32,
    class_mode="categorical"
)
```

Model

Optimizer

optimizers.Adam()

optimizers.SGD()

optimizers.RMSprop()

optimizers.Adagrad()

optimizers.Adadelta()

optimizers.Ftrl()

model training

model.fit()

```python
fit(
    x=None,
    y=None,
    batch_size=None,
    epochs=1,
    verbose='auto',
    callbacks=None,
    validation_split=0.0,
    validation_data=None,
    shuffle=True,
    class_weight=None,
    sample_weight=None,
    initial_epoch=0,
    steps_per_epoch=None,
    validation_steps=None,
    validation_batch_size=None,
    validation_freq=1
)
```

Tools

TensorBoard

TensorBoard is the built-in visualization tool for inspecting the model and the training status.

- Add TensorBoard to the training process

```python
log_dir = 'logs/fit'
```

- Start TensorBoard from the command line

```
tensorboard --logdir logs/fit
```